AI and Professional Responsibility in Australian Law: Redefining the Standard of Care in a Technological Era

Artificial intelligence is no longer an emerging concept in Australian legal practice.

It is embedded in drafting environments, discovery workflows, document review processes and internal knowledge management systems. AI is now part of how work is done, not merely an experimental add-on.

The conversation has matured.

It is no longer about whether AI can improve efficiency. That has largely been established.

The more pressing question is this:

What do professional responsibility and, more importantly, the standard of care look like in an AI-enabled legal practice?

For solicitors and barristers operating under the regulatory frameworks of Law Societies and Bar Associations across Australia and New Zealand, professional obligations are non-negotiable. Duties of competence, confidentiality, supervision, independence and candour to the court form the bedrock of practice.

AI does not dilute these duties.

It reshapes how they must be exercised.

It is not lowering the standard of care.

It is redefining it.

Competence Now Includes Technological Literacy

Traditionally, professional competence meant mastery of legal principles, procedural understanding and sound judgement.

Today, competence also includes understanding the tools influencing legal output.

A practitioner does not need to understand neural networks or machine learning architecture. However, they must understand that AI outputs are probabilistic, not definitive, that hallucinations and factual inaccuracies are possible, that prompts materially shape outputs, that source verification remains essential, and that over-reliance can create unseen risk.

These are not abstract concepts. They become tangible when applied to real legal workflows.

Platforms such as Quillio AI Legal Assistant are built to support lawyers in navigating these realities with practical safeguards and clear oversight.

Consider contract review in a commercial leasing matter.

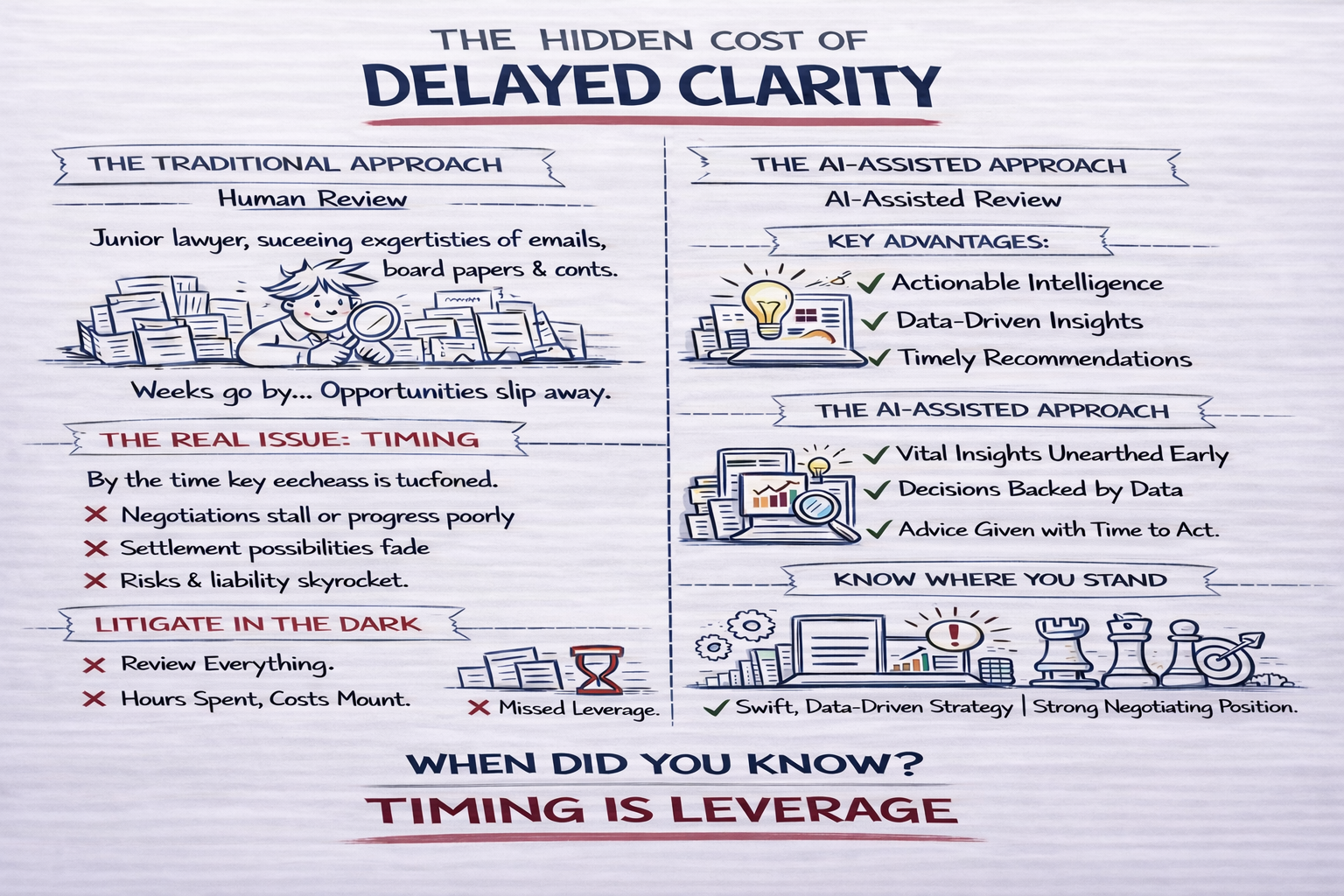

Under a traditional approach, a lawyer reviews each lease manually, identifies key clauses, flags risks and prepares a summary. This process is thorough but time-intensive.

In an AI-enabled workflow, the same set of leases can be analysed rapidly, with key provisions extracted and categorised.

Tools like Quillio AI Legal Assistant can help generate that structured first layer quickly while keeping the lawyer firmly in control of verification and judgment.

An initial output may identify rent review mechanisms, termination rights, assignment restrictions and indemnity clauses. It may also flag deviations from standard terms or provisions that create elevated risk exposure.

This is not final advice.

But it is a structured first layer of analysis.

The lawyer’s role is to validate, interpret and apply judgment, determining which risks are material, how they interact, and what they mean for the client’s position.
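For firms that want to make this division of labour explicit, the review step can be recorded in a simple structure. The following is a minimal sketch only, not a description of how any particular product works. The names `ClauseFinding`, `sign_off` and `ready_for_client` are hypothetical and chosen for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ClauseFinding:
    """One AI-extracted item awaiting lawyer review (hypothetical structure)."""
    category: str                      # e.g. "rent review", "termination", "indemnity"
    extract: str                       # text the tool pulled from the lease
    flagged_risk: bool                 # the tool's own flag, treated as a hint only
    reviewed_by: Optional[str] = None  # set once a lawyer has checked the item
    material: Optional[bool] = None    # the lawyer's judgment, not the tool's


def sign_off(finding: ClauseFinding, lawyer: str, material: bool) -> None:
    """Record that a named lawyer checked the finding against the source lease."""
    finding.reviewed_by = lawyer
    finding.material = material


def unreviewed(findings: list[ClauseFinding]) -> list[ClauseFinding]:
    """Return the findings that no lawyer has signed off yet."""
    return [f for f in findings if f.reviewed_by is None]


def ready_for_client(findings: list[ClauseFinding]) -> bool:
    """A summary is releasable only when every finding has been reviewed."""
    return len(findings) > 0 and not unreviewed(findings)
```

The design choice is deliberate: materiality is a field only the lawyer can set, and nothing is releasable while any item remains unreviewed.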

The shift is subtle but significant.

Competence is no longer defined by the ability to produce work from first principles alone.

It is defined by the ability to critically assess and stand behind the final output, regardless of how it was generated.

The failure to supervise AI-assisted work is not a limitation of technology.

It is a failure to meet the evolving standard of care.

The Standard of Care Is Becoming More Exacting

AI accelerates legal work.

It does not reduce accountability.

Consider a regulatory compliance summary.

Traditionally, this would involve reviewing legislation, guidance material and internal documentation to produce a structured overview of obligations and risk areas.

With AI embedded into the workflow, a first-pass summary can be generated by synthesising relevant inputs.

Quillio AI Legal Assistant, for example, is designed to assist in this process while emphasising human verification and professional oversight at every step.

An output may outline applicable obligations, identify potential areas of non-compliance, and suggest priority actions.

For instance, it may highlight that a reporting obligation has not been met within statutory timeframes, or that certain activities fall within a licensing regime requiring further assessment.
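A timeframe check of this kind is simple arithmetic, which is exactly why it still needs verification: the answer depends entirely on the inputs. The sketch below assumes a fixed period counted in calendar days from a trigger date. The function name `is_overdue` and the 30-day figure in the usage note are hypothetical, and real statutory periods may be counted differently (for example in business days), so this is an illustration rather than a compliance tool.

```python
from datetime import date, timedelta
from typing import Optional


def is_overdue(trigger: date, period_days: int,
               lodged: Optional[date], today: date) -> bool:
    """Return True if the report was lodged late, or is still outstanding past the due date.

    Assumes a simple calendar-day period (an illustrative simplification).
    """
    due = trigger + timedelta(days=period_days)
    if lodged is not None:
        return lodged > due   # lodged, but after the due date
    return today > due        # not lodged, and the due date has passed
```

With a hypothetical 30-day period triggered on 1 March 2024, the due date is 31 March 2024. A report lodged on 2 April would be flagged, while one lodged on 31 March would not. Whether 30 days is the right period, and whether 1 March is the right trigger, remain questions for the practitioner.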

Again, this is not final advice.

It is a starting point.

But the implication is clear.

If a material issue is overlooked, the presence of AI does not excuse the omission.

The responsibility remains with the practitioner.

If anything, the standard becomes more demanding.

Because the expectation is no longer that lawyers spend time locating information.

It is that they exercise judgment in verifying and applying it.

Confidentiality, Data Control and Client Trust

Legal practice is built on trust.

Clients disclose sensitive financial, commercial and personal information under the expectation of strict confidentiality.

Australian-built solutions like Quillio AI Legal Assistant place strong emphasis on data sovereignty, governance, and transparency to help firms meet these obligations responsibly.

The integration of AI into legal workflows requires that this trust be actively maintained through deliberate governance.

Questions that may once have been implicit must now be explicitly addressed.

Where is the data processed?

Is it retained?

Is it used for further model training?

How are access controls managed internally?

What audit trails exist?

These are not technical questions.

They are professional obligations.
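One way to make these questions explicit is to record the answers for each vendor and treat any blank as an open item. This is a minimal sketch under stated assumptions: the `VendorAssessment` fields mirror the five questions above, and the class and function names are hypothetical rather than drawn from any regulator's template.

```python
from dataclasses import dataclass, fields
from typing import Optional


@dataclass
class VendorAssessment:
    """Answers to the governance questions for one AI vendor (illustrative only)."""
    processing_location: Optional[str] = None   # where is the data processed?
    data_retained: Optional[bool] = None        # is it retained?
    used_for_training: Optional[bool] = None    # is it used for further model training?
    access_controls: Optional[str] = None       # how is internal access managed?
    audit_trail: Optional[str] = None           # what audit trails exist?


def open_questions(assessment: VendorAssessment) -> list[str]:
    """List the questions that have not yet been answered."""
    return [f.name for f in fields(assessment)
            if getattr(assessment, f.name) is None]
```

An assessment with any open question is, on this approach, not yet an assessment. An answer of `False` counts as answered; only a missing answer is flagged.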

Responsible AI adoption requires the same level of scrutiny applied to any core infrastructure decision. Vendor assessments, data processing agreements, internal policy frameworks and staff training programs are essential governance mechanisms.

AI is not inherently incompatible with confidentiality.

But the standard of care now requires that confidentiality be demonstrable, not assumed.

Supervision in a Hybrid Workflow

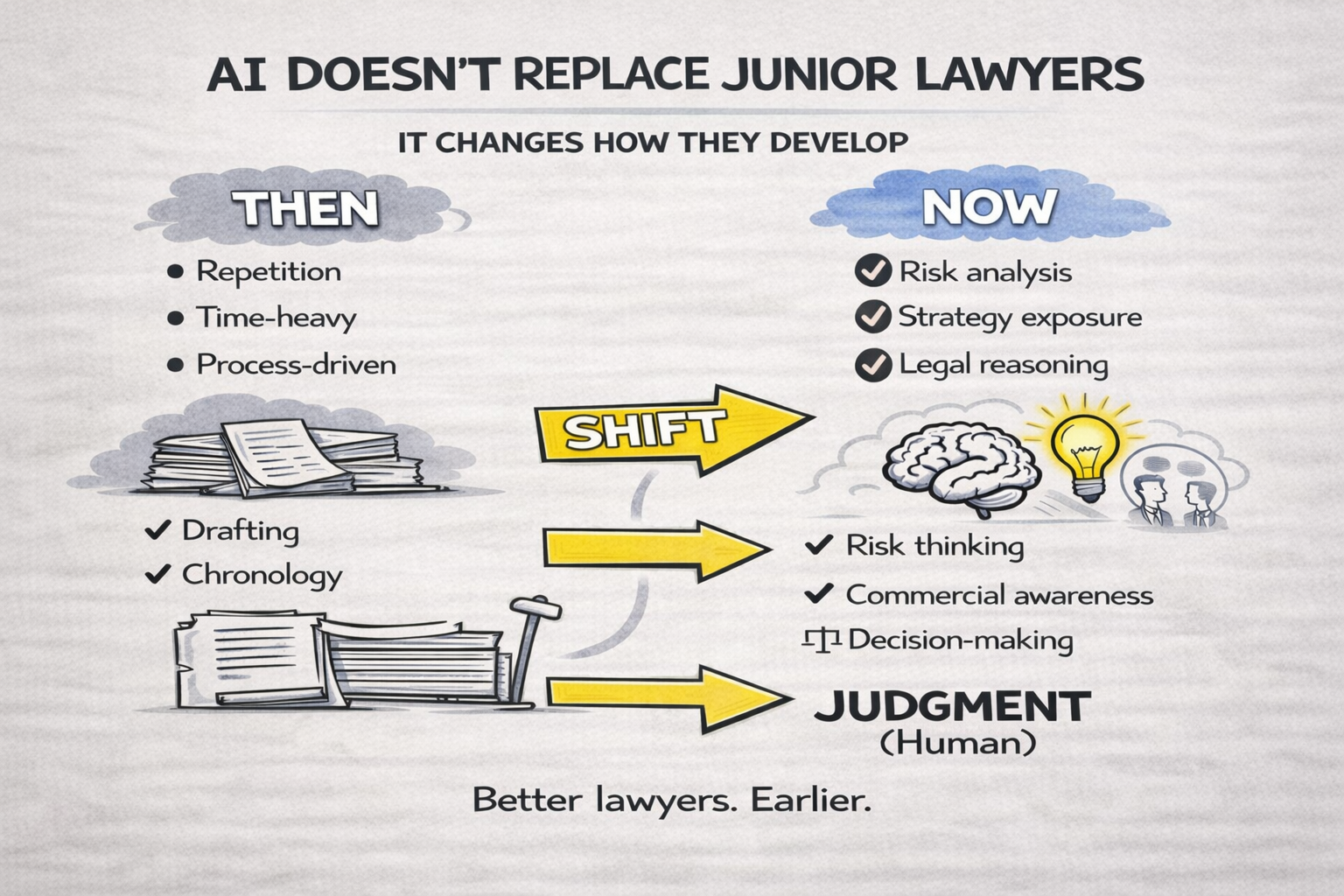

In traditional practice, junior lawyers’ work is reviewed by senior practitioners.

In an AI-enabled workflow, AI-generated outputs also require supervision.

However, the nature of that supervision changes.

Where the traditional focus may have been on structure, formatting or completeness, the emphasis now shifts towards reasoning, risk and strategic relevance.

The lawyer is no longer primarily correcting the form of the work.

They are interrogating its substance.

Legal reasoning must be tested.

Risk exposure must be assessed.

Interpretation must be validated.

AI changes where effort is applied.

It does not remove the need for oversight.

The lawyer remains accountable for the final product.

Professional responsibility cannot be delegated to software.

AI is an assistant, not an author.

Independence and Professional Judgement

Another subtle but important dimension of AI integration concerns independence.

Legal advice must remain free from improper influence. AI systems, while not intentional actors, generate outputs based on patterns derived from existing data.

This introduces a risk of generic reasoning.

Outputs may appear complete and persuasive, but lack the nuance required for a specific matter.

Practitioners must ensure that their analysis remains active and deliberate.

Professional judgement must not become passive acceptance.

AI can assist in structuring thought.

It cannot replace reasoning.

The Emerging Standard of Care

Technology has historically reshaped Australian legal practice.

Electronic filing, cloud systems and digital conveyancing each introduced new compliance expectations before becoming standard practice.

AI represents another inflection point.

The emerging standard of care will not require avoidance of AI.

It will require its proper use.

Structured adoption.

Clear governance.

Active supervision.

Ongoing training.

Quillio AI Legal Assistant was developed precisely to support lawyers and firms in achieving this balanced, responsible integration.

Firms that fail to understand AI-related risk may fall short of evolving professional expectations.

Those that integrate AI thoughtfully may enhance both efficiency and reliability.

Professional responsibility does not weaken in the presence of AI.

It becomes more deliberate.

More visible.

And more exacting.

A Structural Opportunity for the Profession

There is also an opportunity embedded within this transition.

As AI reduces the time spent on repetitive work, such as administrative tasks, drafting scaffolds, formatting and document organisation, lawyers can redirect their focus towards higher-value work.

Strategic advisory roles.

Complex risk assessment.

Client counselling.

Commercial problem-solving.

In this sense, AI does not diminish the profession.

It sharpens it.

But only where it is used with discipline.

The Path Forward

The integration of AI into Australian legal practice is no longer theoretical.

It is operational.

The profession now faces a more sophisticated question:

How do we maintain and strengthen professional standards in an environment where technology accelerates output?

The answer lies not in resistance, nor in blind adoption.

It lies in governance.

In training.

In supervision.

In clarity.

AI will not define the future standard of care on its own.

The profession will.

And those who understand this shift will not simply adapt to it.

They will define what competent, responsible legal practice looks like in the years ahead.

Next Step

We invite readers to share their thoughts and experiences with AI in the comments section below. Whether you are sceptical, actively adopting, or simply observing how the landscape is evolving, these perspectives are critical to shaping the profession’s next phase.

For legal professionals considering practical implementation, tools such as Quillio AI Legal Assistant provide an example of how AI can be embedded within real workflows, not as a replacement for legal expertise, but as structured support that enhances how that expertise is applied.
